Protecting Data in Use for Healthcare Analytics and AI

Overview

MediSure Diagnostics is an anonymized regional healthcare network operating imaging centres, outpatient clinics, and a growing analytics practice. The organization processes protected health information across clinical reporting, claims-quality review, AI-assisted image triage, and research workflows. As demand grew, leadership wanted cloud elasticity and faster experimentation, but security and compliance teams remained uncomfortable with one unresolved gap: Once data reached memory for processing, conventional encryption no longer protected it.

The brief was not simply to “move to cloud.” It was to prove that sensitive healthcare workloads could run in public cloud infrastructure without exposing data in use to unnecessary risk, including administrator-level access, misconfiguration, or overly broad trust in the platform. We designed a confidential-computing landing zone that combined hardware-backed trusted execution environments, attestation-gated key release, segmented data flows, and auditable operations. The result was a practical path to cloud for sensitive workloads that had previously been blocked or delayed.

Instead of treating confidential computing as a niche experiment, we positioned it as a business-enabling security control. The project gave the client a way to accelerate healthcare analytics, support privacy-preserving AI initiatives, and reduce review friction between platform, security, and compliance teams, all without pretending that traditional encryption or perimeter controls were enough on their own.

Quick Stats (12-Week Program)

- 68% faster approval cycle for sensitive analytics workloads

- 84% of targeted PHI jobs moved to protected cloud execution

- 37% less manual audit-evidence collection effort

- 9% median performance overhead on the protected workload tier

Challenges

Primary Challenge

The client faced a critical decision: Keep highly sensitive analytics on constrained on-premises infrastructure, or redesign their cloud approach so protected health information could be processed with stronger runtime assurances. The issue was not lack of cloud tooling. They already had encryption at rest, TLS in transit, identity controls, and logging. What they lacked was confidence that sensitive workloads would remain protected while the data was actively being computed.

Core Challenges

1) Data-in-use exposure:

Clinical records, image metadata, and model features had to be decrypted in memory for analytics and inference. That blind spot made the most sensitive workloads difficult to approve.

2) Operational trust boundaries:

Security teams wanted to reduce dependence on broad trust in hypervisors, platform administrators, and privileged tooling. The concern was not only external attackers, but also insider risk and accidental exposure.

3) Compliance and audit friction:

Each new workload required slow, manual evidence gathering across architecture diagrams, key-management controls, access paths, and change records. This delayed both innovation and regulatory sign-off.

4) Integration complexity:

The environment had to connect with EHR feeds, imaging systems, claims workflows, identity systems, and downstream analytics services without leaking sensitive context into less trusted layers.

5) Performance sensitivity:

Image triage and claims-quality analytics could not absorb major runtime penalties. The client needed stronger protection without turning every sensitive job into a slow batch process.

Why This Mattered

The stakes went beyond security language. Delayed workload approvals meant slower research partnerships, slower AI experimentation, and continued spending on tightly controlled legacy infrastructure. Clinical and operations teams were ready to move faster, but the control environment had become the bottleneck. The client needed an architecture that gave security teams stronger evidence, rather than just stronger promises.

Strategy

Strategic Approach Overview

We approached the engagement with one principle: Confidential computing should extend the security model, not replace it. The goal was never to claim that a trusted execution environment solves every risk. Instead, we used confidential computing where it adds the most value by protecting code and data while they are being processed, and allowing secrets to be released only after the runtime proves it is in a known-good state.

We also avoided an all-or-nothing redesign. Rather than forcing the client to rewrite every workload into enclave-specific code, we used a practical hybrid pattern. The most sensitive analytics services were deployed on confidential virtual machines for broader compatibility, while enclave-backed services handled key mediation, cryptographic operations, and highly restricted policy checks. That gave the client a realistic migration path with stronger assurance and less engineering disruption.

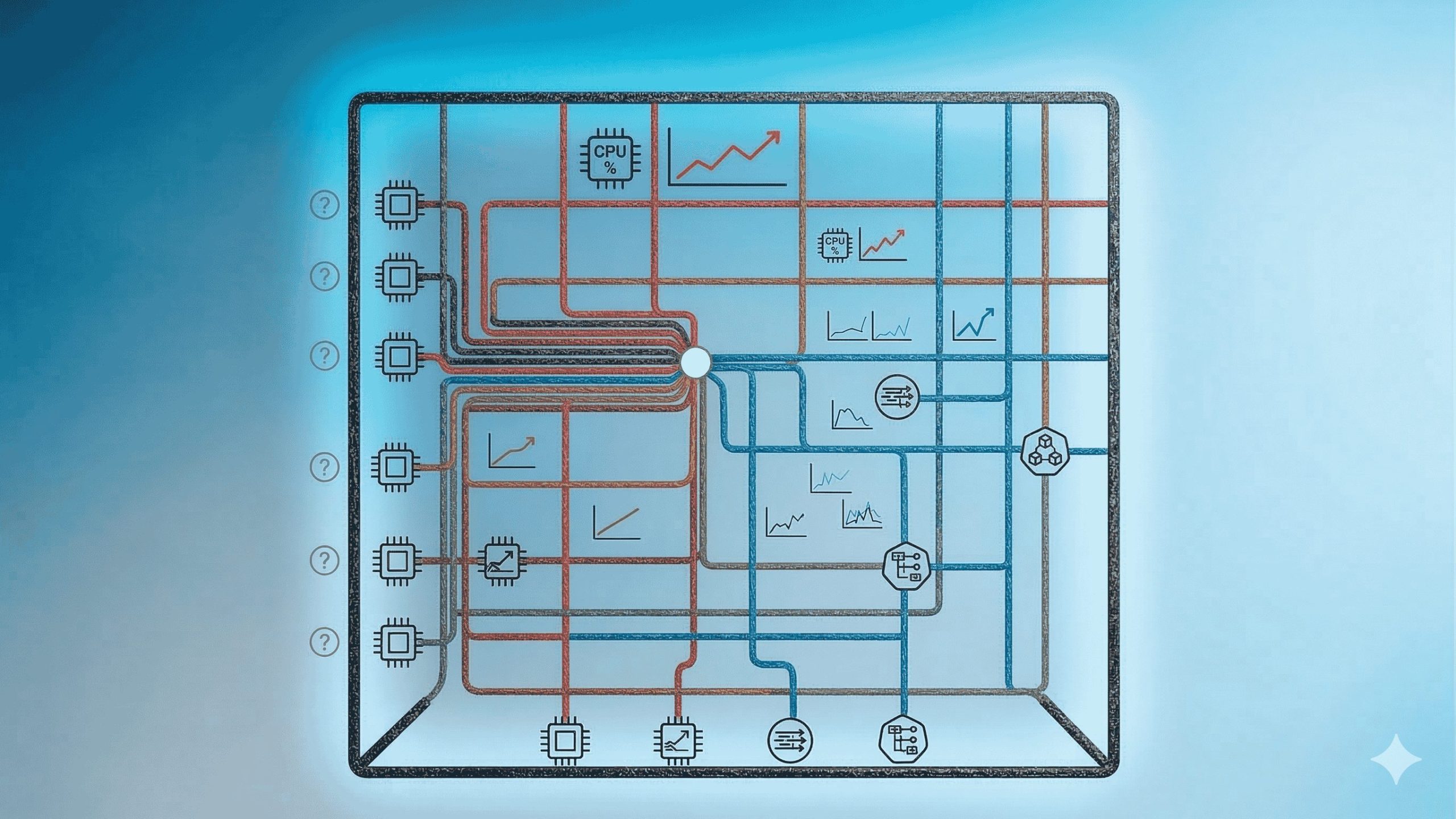

Solution Architecture (Layer-Based Breakdown)

1) Protected Compute Layer

Confidential VMs hosted the analytics and inference services that process PHI, giving the client hardware-backed memory protection and stronger isolation for data in use.

2) Trust and Key Release Layer

Remote attestation verified workload measurements before secrets were released. Key access depended on policy, approved images, and known-good boot state rather than blind infrastructure trust.

3) Data and Integration Layer

Tokenization, encrypted object storage, EHR connectors, imaging metadata services, and claims interfaces were separated so PHI stayed in the protected path while lower-sensitivity data could flow into standard services.

4) Observability and Audit Layer

Logs, traces, and security events were redesigned so operational telemetry remained useful without leaking sensitive payloads. Audit evidence was mapped directly to attested build and deployment records.

Implementation Highlight

Attestation was required before any secret release. Build artifacts, boot measurements, and approved runtime policies were bound to key access, ensuring that a workload could not decrypt PHI or service credentials unless it first proved that it was running inside the expected trusted execution environment.

To minimize unnecessary exposure of sensitive information, the data architecture introduced PHI-aware segmentation. Fully identifiable data, tokenized data, and de-identified datasets were separated into different processing paths, reducing the amount of information that actually required confidential execution while keeping cost and operational complexity manageable.
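The segmentation idea can be illustrated with a minimal Python sketch. The field names, the `classification` label, and the path names below are hypothetical and are not taken from the client's system; the point is only that routing fails closed, so anything unlabelled or carrying a direct identifier goes to the protected path.

```python
from enum import Enum


class Sensitivity(Enum):
    IDENTIFIABLE = "identifiable"   # fully identifiable PHI
    TOKENIZED = "tokenized"         # direct identifiers replaced by tokens
    DEIDENTIFIED = "deidentified"   # labelled as de-identified upstream


# Hypothetical processing paths; real names would map to clusters or queues.
PATHS = {
    Sensitivity.IDENTIFIABLE: "confidential-vm",
    Sensitivity.TOKENIZED: "restricted-standard",
    Sensitivity.DEIDENTIFIED: "standard-analytics",
}

# Assumed field names treated as direct identifiers for this illustration.
DIRECT_IDENTIFIERS = {"patient_name", "mrn", "date_of_birth", "address"}


def classify(record: dict) -> Sensitivity:
    """Classify a record, failing closed to IDENTIFIABLE when unsure."""
    # A direct identifier always wins over whatever label the record carries.
    if record.keys() & DIRECT_IDENTIFIERS:
        return Sensitivity.IDENTIFIABLE
    try:
        return Sensitivity(record.get("classification"))
    except ValueError:
        # Missing or unknown label: treat as fully identifiable.
        return Sensitivity.IDENTIFIABLE


def route(record: dict) -> str:
    """Return the processing path a record should be sent to."""
    return PATHS[classify(record)]
```

A check this simple is not a de-identification test; in practice the label would come from a governed upstream process, and this router would only enforce it.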

The modernization approach was deliberately incremental. The client adopted confidential virtual machines for compatibility with their existing services while reserving enclave-specific code for only the most sensitive trust functions. This approach avoided the risks and expense of a complete system rewrite.

Observability was also redesigned with privacy in mind. Logs, metrics, and traces were preserved for SRE and security teams, but they were structured to emit metadata, status signals, and correlation identifiers rather than raw payloads, ensuring PHI remained protected without limiting operational visibility.

Finally, deployment controls were implemented using a policy-as-code approach, where infrastructure definitions, attestation expectations, network policies, and key management rules were codified. This allowed the secure architecture to be reused consistently for future workloads rather than rebuilt each time.
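As a rough illustration of the policy-as-code idea, the sketch below expresses a workload policy as data and checks a deployment manifest against it. The `WorkloadPolicy` fields and the manifest keys are hypothetical; the engagement's actual policy tooling is not described in this case study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadPolicy:
    """Hypothetical policy for one class of protected workload."""
    approved_image_digests: frozenset
    allowed_egress_hosts: frozenset
    require_confidential_vm: bool = True
    require_attested_key_release: bool = True


def violations(manifest: dict, policy: WorkloadPolicy) -> list:
    """Return readable policy violations; an empty list means compliant."""
    problems = []
    if manifest.get("image_digest") not in policy.approved_image_digests:
        problems.append("image digest is not on the approved list")
    if policy.require_confidential_vm and not manifest.get("confidential_vm", False):
        problems.append("workload is not scheduled on a confidential VM")
    if policy.require_attested_key_release and not manifest.get(
        "attested_key_release", False
    ):
        problems.append("key release is not bound to attestation")
    # Any egress destination outside the allow-list is a violation.
    extra = set(manifest.get("egress_hosts", [])) - policy.allowed_egress_hosts
    for host in sorted(extra):
        problems.append(f"egress to {host} is not allowed")
    return problems
```

Run as a deployment gate, a check like this would block any manifest that returns a non-empty list, which is what lets the same controls travel with each new workload.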

Methodology and Delivery

We used a phased 12-week delivery model designed to create both technical confidence and governance confidence:

Weeks 1-2: Discovery and threat modelling: Mapped PHI flows, risk assumptions, workload eligibility, performance sensitivity, and approval requirements with security, compliance, and engineering stakeholders.

Weeks 3-5: Landing zone and trust controls: Built the confidential workload foundation, network segmentation, attestation flow, key-release policy, and baseline audit logging.

Weeks 6-9: Pilot workload migration: Moved a claims-quality analytics service and an AI-assisted imaging triage workflow into the protected cloud pattern, with controlled test data followed by restricted production traffic.

Weeks 10-11: Validation and hardening: Ran performance tests, control validation, recovery drills, access reviews, and evidence walkthroughs for compliance stakeholders.

Week 12: Phased production rollout: Promoted the pattern into first-phase production and documented the blueprint so additional workloads could be onboarded with far less review friction.

Testing and validation were as important as the build itself. We validated attestation behaviour, measured performance deltas, verified policy-bound key release, tested failure and rollback paths, and confirmed that no sensitive payloads appeared in external logs or operational dashboards.
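One of these checks, confirming that no sensitive payloads reach external logs, can be approximated with a pattern scan. The sketch below is an assumption-laden illustration: the regular expressions are simplistic, hypothetical stand-ins for a real PHI detector, and a clean scan would not by itself prove that logs are free of PHI.

```python
import re

# Hypothetical, deliberately simple patterns; real detectors are far broader.
PHI_PATTERNS = {
    "mrn": re.compile(r"\bMRN[:=]?\s*\d{6,10}\b", re.IGNORECASE),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "dob": re.compile(r"\bDOB[:=]?\s*\d{4}-\d{2}-\d{2}\b", re.IGNORECASE),
}


def find_phi_leaks(lines):
    """Return (line_number, pattern_name) for every suspicious log line."""
    hits = []
    for number, line in enumerate(lines, start=1):
        for name, pattern in PHI_PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits
```

A validation run could feed exported log samples through `find_phi_leaks` and fail the test stage whenever the result is non-empty.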

Results

Impact Summary

Within the first 90 days of pilot-to-production use, the confidential-computing platform changed how the client evaluated sensitive cloud workloads. Security teams moved from exception handling to repeatable approval. Engineering teams moved from waiting on environment reviews to deploying into a pattern that already carried evidence, policy, and controls with it.

Business and Operational Impact

The implementation delivered measurable improvements across both security operations and research productivity. Security and compliance reviews for eligible analytics services completed 68% faster. Instead of multi-week approval loops, teams could rely on repeatable control reviews supported by pre-mapped compliance evidence. Audit preparation also became more efficient. Because attestation records, build provenance, and deployment policies were automatically linked, the organization reduced manual audit-evidence collection effort by 37%, largely by replacing time-consuming screenshot collection and manual proof gathering.

Operationally, the platform accelerated research and analytics work. Approved teams were able to provision protected analytics environments more quickly, resulting in a 52% faster turnaround for research datasets and enabling internal data science teams and external research partners to generate insights sooner. At the infrastructure level, the organization successfully migrated 84% of targeted PHI workloads from a restricted on-premises processing queue to protected confidential cloud environments, achieving higher processing capacity without weakening the organization’s security or compliance posture.

What Changed (Before vs After)

Before the project, the cloud conversation focused on what could go wrong: who might access memory, how secrets would be controlled, whether audit teams would accept the design, and whether performance would collapse under stronger isolation.

After rollout, the conversation changed to workload eligibility, blueprint reuse, and where confidential computing should be applied next. That is an important shift. The organization did not simply deploy a new security feature; it created a repeatable operating model for sensitive cloud adoption.

Stakeholder Feedback (Client Voice)

“Confidential computing gave us a middle path we did not have before. We no longer had to choose between keeping everything on tightly controlled legacy infrastructure or taking on more runtime trust than we were comfortable with.” – Director of Cloud Security

Long-Term Value

The client now has more than a single successful deployment. They have a reusable blueprint for future workloads involving AI inference, privacy-preserving research collaboration, and cross-functional analytics. That blueprint reduces onboarding time for new teams, improves the quality of security conversations, and creates a stronger foundation for regulated innovation in the cloud.

Frequently Asked Questions

1) How is confidential computing different from encryption at rest and in transit?

Encryption at rest protects stored data, and encryption in transit protects data moving across networks. Confidential computing addresses the third state: Data in use. It does that by executing code inside a hardware-backed trusted execution environment so memory contents and runtime state receive stronger protection while the workload is actively processing sensitive information.

2) What technologies powered the solution?

The solution used a confidential virtual machine pattern for protected compute, remote attestation to verify the runtime, KMS or HSM-backed key release tied to approved measurements, tokenization for data minimization, and policy-as-code for repeatable deployment. The design was intentionally portable across the major cloud confidential-computing patterns rather than being over-optimized for a single product feature.

3) Why not put everything inside an enclave?

Because that would have increased delivery time, engineering risk, and operational complexity. A full enclave rewrite is sometimes appropriate for narrowly scoped cryptographic or high-assurance services, but most healthcare workloads need a more pragmatic path. Using confidential VMs for broader compatibility and enclaves for the most sensitive trust functions provided a better balance of assurance and practicality.

4) How were secrets and keys protected?

Secrets were not released based only on IAM permissions or network location. The runtime first had to produce attestation evidence showing that it was the expected workload image running in the expected trusted environment. Only then could policy allow decryption or key operations. This reduced the chance that copied images, drifted hosts, or unapproved runtime states would gain access to sensitive material.
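The decision logic described here can be sketched in a few lines of Python. This is a simplified illustration, not the client's implementation: it assumes a separate attestation verifier has already validated the evidence signature and populated `AttestationClaims`, and all class, field, and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationClaims:
    """Claims extracted from attestation evidence (hypothetical shape)."""
    image_digest: str
    tee_type: str
    secure_boot: bool
    signature_verified: bool  # set by an assumed upstream verifier


@dataclass(frozen=True)
class ReleasePolicy:
    approved_digests: frozenset
    approved_tee_types: frozenset


def may_release(claims: AttestationClaims, policy: ReleasePolicy) -> bool:
    """True only if every attestation condition in the policy holds."""
    return (
        claims.signature_verified
        and claims.secure_boot
        and claims.tee_type in policy.approved_tee_types
        and claims.image_digest in policy.approved_digests
    )


def release_key(claims, policy, key_store: dict, key_id: str) -> bytes:
    """Return key material only after the attestation check passes."""
    if not may_release(claims, policy):
        raise PermissionError("attestation does not satisfy release policy")
    return key_store[key_id]
```

In a real deployment the key store would be a KMS or HSM that evaluates the policy itself, so the key never passes through code that has not been attested.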

5) What was your approach to logging and monitoring without exposing PHI?

We treated observability as a design problem, not an afterthought. Application telemetry was redesigned to emit correlation IDs, status codes, measurements, and control events instead of raw payloads. Security teams still received the signals they needed for monitoring and incident response, while sensitive content remained inside the protected path or in tightly controlled stores.
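A minimal sketch of this pattern, assuming a simple allow-list of telemetry fields, might look like the following. The field names and helper functions are hypothetical; only the standard-library `json` and `logging` modules are used.

```python
import json
import logging

# Assumed allow-list: only these keys may ever appear in a log line.
ALLOWED_FIELDS = {"correlation_id", "event", "service", "status", "duration_ms"}


def safe_event(event: dict) -> dict:
    """Drop every field that is not explicitly allow-listed."""
    return {key: value for key, value in event.items() if key in ALLOWED_FIELDS}


def log_event(logger: logging.Logger, event: dict) -> str:
    """Log the allow-listed view of an event as JSON and return the line."""
    line = json.dumps(safe_event(event), sort_keys=True)
    logger.info(line)
    return line
```

An allow-list only constrains which fields are emitted, not what they contain, so values such as `correlation_id` would still need to be generated without embedding patient data.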

6) Did confidential computing create a large performance penalty?

There was measurable overhead, but it was manageable and predictable. In this scenario, the median impact stayed within a planned 9% band for the first wave of protected workloads, which the client accepted because the gain in runtime assurance unblocked workloads that previously could not move at all. The right question is not whether there is any overhead, but whether the security value justifies it for the workload.
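For readers who want to reproduce this kind of figure on their own workloads, one way to compute a median overhead from paired runs is sketched below. The function name is hypothetical and the timings in the usage lines are illustrative only; they are not measurements from this engagement.

```python
from statistics import median


def median_overhead_pct(baseline_ms, protected_ms):
    """Median per-job overhead of protected runs versus baseline, in percent."""
    if len(baseline_ms) != len(protected_ms) or not baseline_ms:
        raise ValueError("need equal-length, non-empty paired timings")
    overheads = [
        (protected - baseline) * 100 / baseline
        for baseline, protected in zip(baseline_ms, protected_ms)
    ]
    return median(overheads)


# Illustrative timings only, not project data.
baseline = [100, 200, 400]
protected = [108, 218, 440]
overhead = median_overhead_pct(baseline, protected)
```

Pairing each job with its own baseline, rather than comparing two overall medians, keeps a few unusually long jobs from dominating the result.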

7) What made this approach innovative for the client?

Innovation came from combining security architecture with delivery pragmatism. Instead of treating confidential computing as a lab experiment, we made it part of an operational blueprint with attestation, evidence mapping, deployment policy, and workload segmentation built in. That is what turned a promising technology into something the business could actually adopt.